This content was copied from my previous site; I hope it is still up to date.

EMC (now LinuxCNC), RT-Preempt Linux and sercos III

Update 2017: Support for real-time kernel preemption has been fully integrated into LinuxCNC. No need for tinkering anymore.

Last change: 10.10.2011

Update: New git repository

Update: New YouTube Video

Introduction

This is a cooperative project of the Institute for Control Engineering (ISW) at the University of Stuttgart and the company Manz Automation.

The project plans to extend the Enhanced Machine Controller (EMC) so that it can use sercos III hardware. Sercos III is an Ethernet-based automation bus (https://www.sercos.org).

The first goal is to bring into operation a machine that uses sercos III drives for automation. Bus communication is handled by a proprietary sercos III master stack based on COSEMA (http://cosema.sourceforge.net) and a sercos III PCI card. The stack was adapted to run as a UIO driver under RT-Preempt Linux.

To use sercos III in EMC, some functions have to be added, changed, or extended. This page was set up to give the community feedback on what we have changed, the bugs we have fixed, and the experience we gained while working with EMC.

The intended audience of this page is EMC developers and other developers.

Short overview of the changes to EMC

The linuxrtapi was extended slightly with features to use regular POSIX semaphores.

A shared memory (shm) interface was added as a HAL component to enable fast communication with third-party programs.

To demonstrate this feature, there is a new SharedMemory machine configuration and an external example program. EMC and the external program exchange data in real time. The shm interface is intended for talking to a sercos III communication stack and the drives connected to the bus.

We currently use a branch built on top of EMC version 2.4.4, since the RT-Preempt patches from Michael Büsch and Jeff Eppler still target this version.

Here is the original location of the patch: http://bu3sch.de/patches/emc-linux-rt.

Our work is still in progress and there are still lots of things to do. Below are our adaptations and the current, probably unfinished, changes.

Changelog:

- Patched version 2.4.4 with the RT-Preempt patch

- Added POSIX semaphores to linuxrtapi

  - Currently 4 semaphores can be used

  - Still more work to do

- Added SharedMemory HAL module

  - Features real-time interaction with third-party programs

  - Currently only position and status signals are transferred via shared memory

- Added SharedMemory machine configuration

- External test program to test the shared memory interface

- Added HAL components for composition and decomposition of uints

  - constantu32

  - compu32xbit

  - compxbitu32

Here is the patch:

The patch was created with

git diff v2.4.4..HEAD > emc_rt_shm.patch

UPDATE: Repository is now online at gitorious.org:

Building the patched Version of EMC

This manual assumes that you have Debian squeeze on your computer. If not, you may have to modify some of the commands below.

First of all, you need a kernel with CONFIG_PREEMPT_RT enabled (see Realtime-Linux and Realtime-Preempt-Kernel).

Getting the source (see also Gettingthesourcewithgit )

$ git clone git://git.linuxcnc.org/git/emc2.git emc2-dev

$ cd emc2-dev

Check out v2.4.4 and create a branch to patch later:

$ git checkout v2.4.4 -b 2.4.4-rt-shm

Apply the patch:

$ git apply <path_to_patch>/emc_rt_shm.patch

Compile: a temporary build script performs the necessary steps (alternatively, run the commands in the script by hand):

$ cd src

$ ./build.sh

Build the shm tester in a separate shell:

$ cd hal

$ make -f Makefile_shm

Start EMC and load the SharedMemory configuration (as root)

emc

Start the shm tester in a separate shell with real-time priority:

$ su root

# chrt 99 ./shm_interface_test

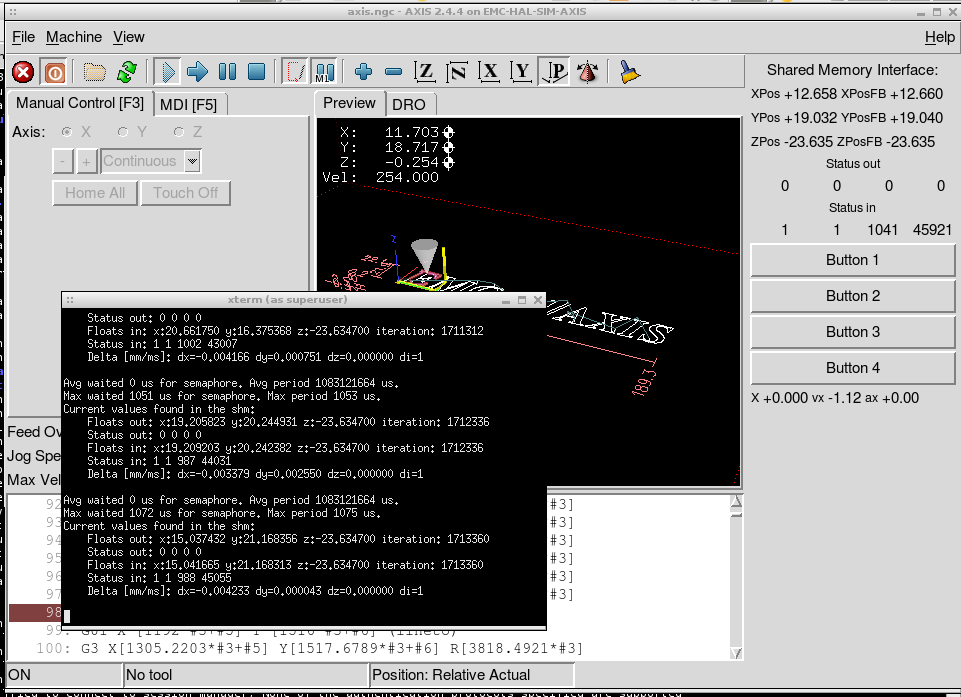

You should see something like this in the output of the shm tester. The output shows a short timing analysis measured over 1024 samples and a snapshot of the current data on the shm interface. The “Delta” line shows the positions that are fed back to the shm. To get at least some kind of simulated hardware behavior, I send back the position that was commanded in the previous step.

...

Avg waited 996.570312 us for semaphore. Avg period 999.572266 us.

Max waited 1080 us for semaphore. Max period 1083 us.

Current values found in the shm:

Floats out: x:81.011955 y:6.368796 z:-23.634700 iteration: 1499722

Status out: 0 0 0 0

Floats in: x:81.016188 y:6.368796 z:-23.634700 iteration: 1499722

Status in: 1 1 1042 105471

Delta [mm/ms]: dx=-0.004233 dy=0.000000 dz=0.000000 di=1

...
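The Delta values can be reproduced from the two "Floats" lines. The following is only a minimal sketch and not part of the patch: `shm_delta` is a hypothetical helper name, it assumes the output format shown above with plain ASCII minus signs, and the sign convention (out minus in) is inferred from the sample values.

```sh
# shm_delta: hypothetical helper, not part of the patch.
# Reads shm tester output on stdin and prints "out minus in" per axis.
shm_delta() {
    awk '
        /^Floats (out|in):/ {
            dir = $2                          # "out:" or "in:"
            for (i = 3; i <= NF; i++) {
                # Keep only "axis:value" fields such as "x:81.011955".
                if (split($i, kv, ":") == 2 && kv[2] != "")
                    val[dir, kv[1]] = kv[2]
            }
        }
        END {
            printf "dx=%.6f dy=%.6f dz=%.6f\n",
                val["out:", "x"] - val["in:", "x"],
                val["out:", "y"] - val["in:", "y"],
                val["out:", "z"] - val["in:", "z"]
        }
    '
}
```

Because the tester prints continuously, one way to use this is to save a single snapshot of the output to a file (for example a hypothetical snapshot.txt) and run `shm_delta < snapshot.txt`.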

If everything is going right it should look like this:

Update: Demo Video

Here is a demo video at YouTube:

http://www.youtube.com/watch?v=-HgGGhi8aOs

Other interesting stuff

To see the rtapi_app process together with its real-time threads, you can use the following (captured while the latency test was running):

...

$ ps H -eo pid,pri,rtprio,user,args | grep rtapi

PID PRI RTPRIO USER COMMAND

6021 19 - root /home/micha/EMC/2.4.4-rt-shm/bin/rtapi_app load threads name1=fast period1=50000 name2=slow period2=1000000

6021 138 98 root /home/micha/EMC/2.4.4-rt-shm/bin/rtapi_app load threads name1=fast period1=50000 name2=slow period2=1000000

6021 137 97 root /home/micha/EMC/2.4.4-rt-shm/bin/rtapi_app load threads name1=fast period1=50000 name2=slow period2=1000000

...

ToDo (Intended):

- Bugfixes

- Review and test all the changes

- Port the enhancements to a newer EMC version

- Improve the extended GUI

- A few .gitignore files are currently deleted to see what is happening

- Improve semaphore implementation

- Some block still warns about missed deadlines

- Correct configuration to use feedback values

- Run only the real-time parts of EMC as root (see build.sh)

- And of course, get the rtpreempt branch into the official repository

- DONE: Add multiplexer component to HAL -> put a few bits together to a uint

- DONE: Make the latency tester run correctly on EMC (It shows higher values than my diff variable).

Notes

- Hardware drivers are currently not available.

Latency Testing

There are many ways to check whether your real-time system meets the requirements of your application. There are also many things that can cause your system to miss deadlines or behave strangely. To get an overview of how your system behaves, you can use for example:

- Cyclictest or one of the other tools from the rt-tests test suite.

- The built-in latency tester. For example, with a 1 ms cycle time:

$ latency-test 1000000

Tricks

This command shows you all the processes in the system together with all their threads and the corresponding priorities:

$ ps axm o pid,pri,rtprio,cmd

Or alternatively

$ ps H -eo pid,pri,rtprio,user,args

Reduce Priority of other RT Processes

To make sure that your process is the only one with high priority, you can lower the priority of other processes:

ps H -eo pid,pri,rtprio,user,args | grep 99

PID PRI RTPRIO USER COMMAND

3 139 99 root [migration/0]

14 139 99 root [posixcputmr/0]

15 139 99 root [watchdog/0]

chrt -p 95 3

chrt -p 95 14

chrt -p 95 15

Try again:

./cyclictest -a -n -t -p 99
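The manual steps above (find every thread at RTPRIO 99, then lower it with chrt) can be scripted. This is a minimal sketch under stated assumptions: `lower_rt99` is a hypothetical helper name, it expects the column layout produced by the ps call above, and it only prints the chrt commands instead of running them.

```sh
# lower_rt99 [NEW_PRIO]: hypothetical helper, default NEW_PRIO is 95.
# Reads rows in the "PID PRI RTPRIO USER COMMAND" layout on stdin and prints
# one chrt command per row whose RTPRIO column is 99. It changes nothing itself.
lower_rt99() {
    awk -v prio="${1:-95}" '$3 == 99 { printf "chrt -p %d %d\n", prio, $1 }'
}
```

A possible use is `ps H -eo pid,pri,rtprio,user,args | lower_rt99 95` to review the list first. Only after checking it (it would also match your own process if that already runs at 99) pipe the result to `sh` as root.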

Known Problems

Hal configuration

I once had the problem that calling halcmd failed and caused a lot of system load. Even “halcmd -V show” caused this behavior. I had to restart to solve the problem.

It seems that the HAL configuration does not get deleted after closing EMC when it is started with setuid from a user account. You have to call halrun from the root account and exit again to solve this problem. This bug is probably related to the problem above.

To inspect shared memory blocks, please use

ipcs -m

and remove them with

ipcrm -M 0x0000cafe

ipcrm -M 0x48414c32
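To avoid calling ipcrm on segments that do not exist or are still in use, the output of ipcs can be filtered first. This is a hedged sketch: `stale_hal_shm` is a hypothetical helper name, and it assumes the column order that ipcs from util-linux prints (key, shmid, owner, perms, bytes, nattch). It only prints the commands.

```sh
# stale_hal_shm: hypothetical helper. Reads `ipcs -m` output on stdin and
# prints an ipcrm command for each of the two keys named above, but only
# when the nattch column reports that no process is attached any more.
# Assumption: column order "key shmid owner perms bytes nattch".
stale_hal_shm() {
    awk '($1 == "0x0000cafe" || $1 == "0x48414c32") && $6 == 0 {
        printf "ipcrm -M %s\n", $1
    }'
}
```

For example, `ipcs -m | stale_hal_shm` lists the removal commands for leftover segments, which can then be run by hand.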

Analyzing Jitter:

There seems to be an issue with measuring the jitter/latency times. I measured the following values during a test run of the EMC latency tester, and every measuring method shows values that differ a lot from the others. (The test machine is an IBM ThinkPad T40 with an Intel® Pentium® M processor at 1500 MHz and kernel 2.6.33.7.2-rt30, without further tuning.)

The built-in latency-test program shows:

Servo Thread: 1ms Max Jitter 261101 ns

Base Thread 50us Max Jitter 101701 ns

The Kernel Latency Tracer shows:

> $ grep -v " 0\$" /sys/kernel/debug/tracing/latency_hist/wakeup*/CPU0

>

> Minimum latency: 1 microseconds

> Average latency: 9 microseconds

> Maximum latency: 65 microseconds

> Total samples: 6261331

> There are 0 samples lower than 0 microseconds.

> There are 0 samples greater or equal than 10240 microseconds.

> usecs samples

> 1 1456

> 2 367

> 3 213

> 4 4928

> 5 1884

> 6 427262

> 7 911607

> 8 1043591

> 9 890775

> 10 454103

> 11 1317141

> 12 801684

> 13 85052

> 14 40819

> 15 86971

> 16 118768

> 17 14031

> 18 8163

> 19 5077

> 20 18252

> 21 6621

> 22 3328

> 23 4899

> 24 4439

> 25 3579

> 26 2740

> 27 1394

> 28 474

> 29 263

> 30 298

> 31 128

> 32 110

> 33 116

> 34 202

> 35 238

> 36 93

> 37 71

> 38 67

> 39 61

> 40 7

> 41 7

> 42 3

> 43 18

> 44 4

> 46 2

> 47 1

> 48 5

> 49 1

> 50 2

> 51 1

> 52 1

> 54 3

> 55 2

> 56 2

> 57 1

> 61 1

> 63 1

> 64 1

> 65 3
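A long histogram like this is easier to compare with the other measurements once it is condensed. The following is a minimal sketch, assuming the "usecs samples" row format shown above: `hist_summary` is a hypothetical helper name, and the 50 us default limit is an arbitrary choice for illustration.

```sh
# hist_summary [LIMIT_US]: hypothetical helper, default limit is 50.
# Reads "usecs samples" rows on stdin (a leading "> " quote marker is
# stripped) and prints the total sample count, the largest latency that has
# at least one sample, and the number of samples above the limit.
hist_summary() {
    awk -v limit="${1:-50}" '
        { sub(/^>[ \t]*/, "") }               # drop a quote marker if present
        NF == 2 && $1 ~ /^[0-9]+$/ && $2 ~ /^[0-9]+$/ {
            total += $2
            if ($2 > 0 && $1 + 0 > max) max = $1 + 0
            if ($1 + 0 > limit + 0) above += $2
        }
        END { printf "total=%d max_us=%d above_%dus=%d\n", total, max, limit, above }
    '
}
```

Header and summary lines of the tracer output are ignored because they do not consist of exactly two integers, so the grep output can be piped into `hist_summary` unchanged.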

And my built-in latency tracing shows:

New maximum latency of task 1 is 112 at period 1000 us

New maximum latency of task 1 is 113 at period 1000 us

New maximum latency of task 0 is 99 at period 50 us

New maximum latency of task 0 is 101 at period 50 us

In my opinion, 261 us, 65 us and 113 us differ a lot from each other, even if you take the different measurement methods into account. This is surely some kind of issue.

A related problem is that the rtapi threads get different priorities and different cycle times, and it seems that they are sometimes scheduled to run at the same time. I think we should at least give the thread with the shorter period the higher priority.
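The rule suggested here (shorter period, higher priority) can be sketched as a small filter. This is only an illustration of the idea and not how rtapi assigns priorities: `assign_prio` is a hypothetical helper name, the "name period_ns" input format is an assumption, and the start value 98 is just an example.

```sh
# assign_prio [TOP]: hypothetical helper, default TOP is 98.
# Reads "name period_ns" rows on stdin, orders them by period (shortest
# first) and hands out descending priorities starting at TOP, so the thread
# with the shortest period gets the highest priority.
assign_prio() {
    sort -k2,2n | awk -v top="${1:-98}" '{ printf "%s %d %d\n", $1, $2, top - (NR - 1) }'
}
```

Fed with the two threads from the latency test (fast with 50000 ns and slow with 1000000 ns), it assigns the higher priority to fast regardless of the input order.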

Contact

Feel free to contact the author via: michael.abel ä ä t isw.uni-stuttgart.de

Regards Michael